Scientific Rigor versus Rigor Posturing

An analysis of the claims about methodological rigor in Moss-Racusin et al (2012)

This is the second post in my Unsafe Science series on issues related to our reversal of Moss-Racusin, Dovidio, Brescoll, Graham and Handelsman (2012). Moss-Racusin et al (hence, “M-R”) found biases against women in science hiring; we performed a series of very close replication attempts and they did not merely fail, though they did fail, they produced a reversal: We found biases against men.

In doing the replication studies, we had to attend very closely to what was reported in the M-R paper. And when we did so, some of what we found was … strange. This post goes through some of that very strange stuff.

But first … some throat-clearing. By conventional academic standards, Moss-Racusin and her co-authors are very very successful. Kudos to them! Handelsman, for example, is a named chair at University of Wisconsin, heads an institute there, and was Obama’s Associate Director for Science at the White House Office of Science and Technology Policy.

This post is not about Moss-Racusin or her co-authors as humans, or about their careers writ large. It is just about this paper:

Rigor

Rigor is a thing in methodology and statistics. It typically involves taking steps to maximize data and inference quality. M-R had one strength — respondents were actual faculty. This is relevant because they evaluated applicants for a lab manager position, so in this sense, the study’s sample corresponded to those to whom they hoped to generalize their findings.

But everything else was methodologically pedestrian. Or worse (but I’ll get to that later in this post).

There were precious few markers of rigor in methodology, statistics, or practices. There were no adversaries brought on board for an adversarial collaboration. Their main measures had not been previously validated. They tested a single mediational model seeking to explain their results and tested no alternatives.1 Most of what M-R did were common, pedestrian practices in social psychology at the time. Not only were they not particularly rigorous, some are now known to be quite flawed (but that is beyond the scope of this essay, though some will be addressed in others in this series).

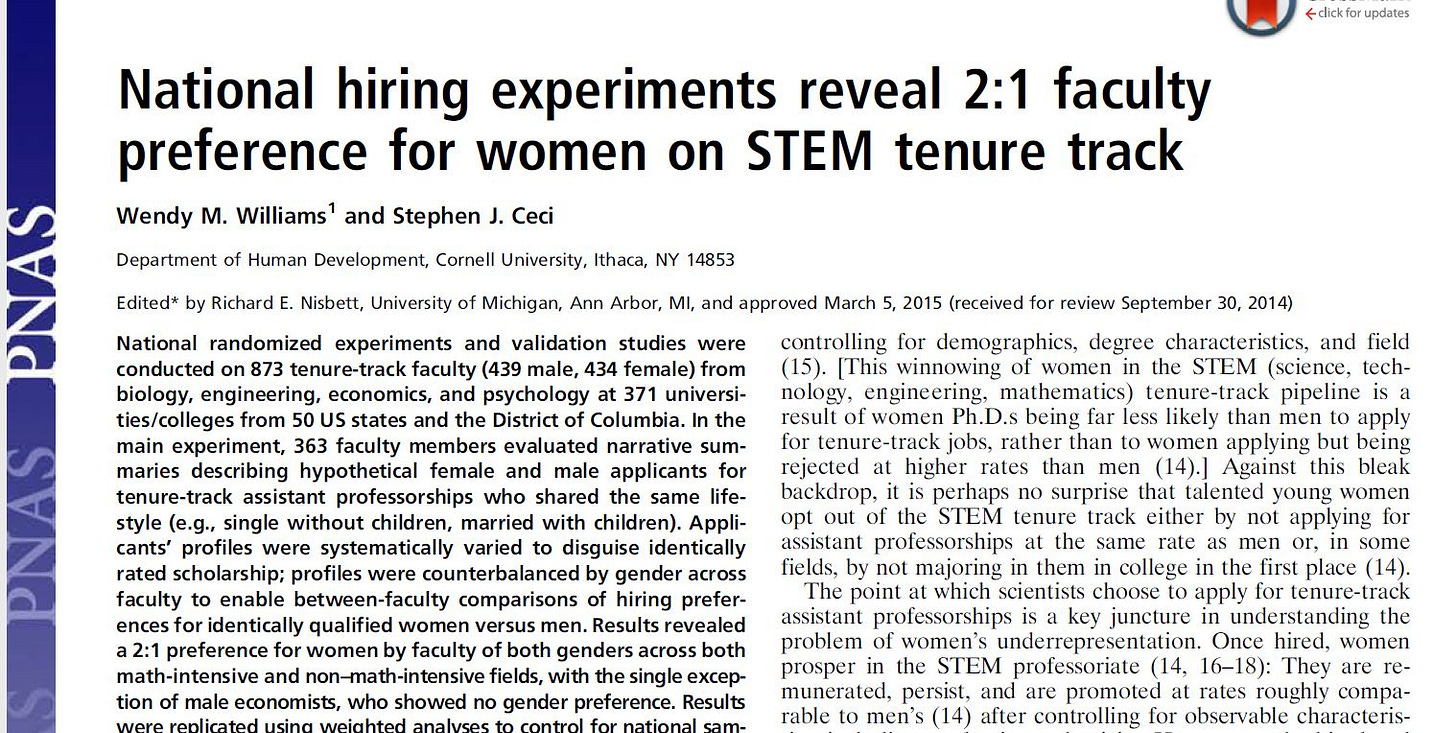

And the thing they studied — academics’ evaluations of a pretty good but not great applicant for a lab manager position — has relevance only to one of the most minor positions in academic science, contrary to, e.g., faculty positions or postdocs. And who hires “pretty good but not great” applicants, as opposed to the best applicant for the job anyway? Of course, one could craft a narrative about pipelines and biases more generally, but M-R produced no evidence that bore on any of this, though they did selectively cite or extol papers consistent with their narrative of sexist oppression.2 And not to put too fine a point on it, this came out a few years later:

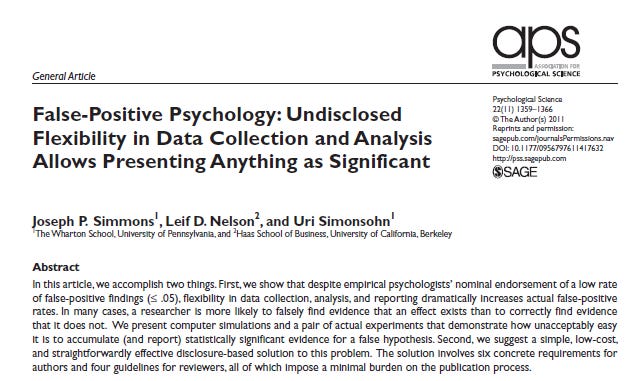

The M-R study was not methodologically any worse than most other social psychology studies of that era, it was mostly merely pedestrian — business as usual in social psychology. For a deeper dive on said business as usual:

~75% of Psychology Claims are False

In this essay, I explain why, if the only thing you know is that something is published in a psychology peer reviewed journal, or book or book chapter, or presented at a conference, you should simply disbelieve it, pending confirmation by multiple independent researchers in the future.

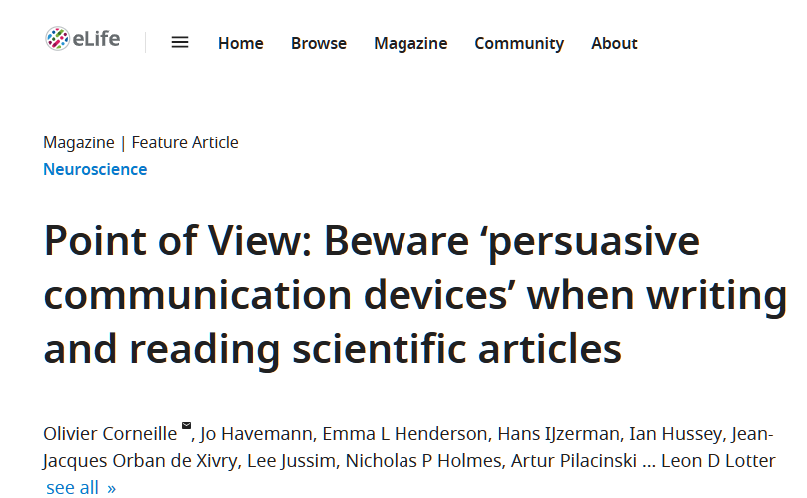

Rigor Posturing

Rigor posturing is also a thing. Rigor posturing involves framing the pedestrian things one does in a study as if they are markers of rigor, typically to persuade others (editors, reviewers, academic readers, politicians, reporters and op-ed writers, the wider public) that one has conducted an unusually credible study and anyone who does not believe it must be some sort of science denialist. As such, rigor posturing is a subset of the rhetorical techniques researchers use to make their work seem more impressive than it really is, something we addressed in this paper:

So let’s return to M-R and see how they framed the “rigor” of their study. They wrote:

However, to rigorously examine the processes underscoring faculty gender bias, we reverted to standard practices at this point by averaging the standardized salary variable with the competence scale items to create a robust composite competence variable (α = 0.86). This composite competence variable was used in all subsequent mediation and moderation analyses.

Salary (in dollars) and competence (which was itself the sum of three questions with scores ranging from 1 “not at all” [competent] to 7 “very much”) were measured on different scales, so they standardized the scores and averaged the standardized scores.3 There is nothing particularly “rigorous” or “robust” about this, though there is nothing wrong with it, either. Social psychologists average or sum scores from multiple questions all the time, and if responses to the individual items are on different scales (e.g., some responses go from 1-5, others 0-100, etc.) first statistically standardizing the individual items and then summing or averaging them is common practice. It is social psychology pedestrian business as usual. Well wait, maybe there is something wrong with it — referring to this as “rigorous” cannot be justified by any meaning of “scientific rigor” with which I am familiar.

Rigor Posturing 2.0. How Representative Was M-R’s Sample? Representative of Whom?

Framing one’s work as if one has a representative sample when one does not is another form of rigor posturing. Representative samples are sort of a gold standard in data collection in the social science, because collecting one means one has excellent sampling grounds for generalizing from one’s results to the wider population.4 So it is interesting to see whether M-R was written in such a way as to imply they had a representative sample. They wrote:

… we obtained the necessary power and representativeness to generalize from our results …

and

Indeed, we were particularly careful to obtain a sample representative of the underlying population…

Note the interesting phrasing, “representative of the underlying population.” What was the “underlying population”? Was it American STEM faculty? American faculty in biology, physics, and chemistry? American faculty at top tier research institutions in biology, physics, and chemistry?

At best, they were representative of the faculty in the 23 biology, physics and chemistry departments in the six universities they sampled from. No one else in all of the American academy had a chance of being selected. Having an equal chance (to that of every other person in the target population) of being selected is critical for representative sampling. Without it, no representative sample.

Here is what M-R wrote, in their supplement:

We sought to strategically select departments for inclusion that were representative of high-quality United States science programs. Thus, participants were recruited from six anonymous American universities, all of which were ranked by the Carnegie Foundation as “large, Research University (very high research productivity)”

Note the hedge. They did not write “We selected departments that were representative of high-quality U.S. science programs.” It is what they sought to do. If so, given what it takes to produce a representative sample, they failed miserably, which I will explain forthwith.

But first, they also wrote (also in the supplement):

… these demographics [of their sample] are representative of both the averages for the 23 sampled departments (demographic characteristics for the sampled departments were 78% male and 81% White, corresponding closely with the demographics of those who elected to participate), as well as national averages.

Its great that the demographics corresponded roughly to national averages. Definitely better than the alternative of not corresponding. But this is not in the same universe as a representative sample of faculty. Such samples can be obtained by giving every faculty member in the population of universities they targeted (high quality research universities) an equal chance of being selected for participation. M-R did nothing of the kind.

Long as one remembers that the “underlying population” is not American science faculty, its not American faculty in biology, physics and chemistry, and its not even American faculty at top institutions in biology, physics and chemistry, one will have an understanding of just now UNrepresentative the sample was. “The underlying population” is merely the biology, physics and chemistry faculty at the six institutions from whom they solicited participants. Whether their responses generalized to anyone at all beyond those six institutions was unknowable from M-R’s study.

What is a Representative Sample?

This review from The Sage Handbook of Online Research Methods, on e-mail and web based sampling methods, puts it this way:

Survey sampling can be grouped into two broad categories: probability-based sampling (also loosely called ‘random sampling’) and non-probability sampling. A probability based sample is one in which the respondents are selected using some sort of probabilistic mechanism, and where the probability with which every member of the frame population could have been selected into the sample is known.

Non-probability samples, sometimes called convenience samples, occur when either the probability that every unit or respondent included in the sample cannot be determined, or it is left up to each individual to choose to participate in the survey.

M-R did not have a representative sample of anything other than the three fields within the six universities that they sampled from. Now, they never wrote “our results generalize to faculty across the U.S.A.” Here is what they did write:

The present study is unique in investigating subtle gender bias on the part of faculty in the biological and physical sciences. It therefore informs the debate on possible causes of the gender disparity in academic science by providing unique experimental evidence that science faculty of both genders exhibit bias against female undergraduates.

I mean, the faculty were literally “in the biological and physical sciences.” But perhaps you can forgive a naive reader for not thinking through the implications of this statement. Would a reporter, academic outside of social science, or anyone else without expertise in methodological rigor or even with such expertise reading it superficially NOT interpret this to mean “our 127 faculty were in the biological and physical sciences”? Would, instead, they interpret it to mean their results generalized to faculty in … wait for it … its not just biological and physical sciences … ready … here it comes… “academic science”!!!???

It (the leaping to wild conclusions) gets worse, from M-R:

our findings raise concerns about the extent to which negative predoctoral experiences may shape women’s subsequent decisions about persistence and career specialization.

One study, then-unreplicated, N=127, 3 fields, 6 universities, a lab manager position, and now we are talking how “negative predoctoral experiences shape women’s decisions.”

Rigorous review would never have allowed claims of “representativeness” into the final published paper. So, in addition to the problems inherent to M-R this is a comment on the quality of peer review at the journal, PNAS, that published it.

The Power Analysis That Wasn’t

If all that wasn’t bad enough … hold on to your hats. Time for some

There is this very strange statement in the M-R paper:

Additionally, in keeping with recommended practices, we conducted an a priori power analysis before beginning data collection to determine the optimal sample size needed to detect effects without biasing results toward obtaining significance (SI Materials and Methods: Subjects and Recruitment Strategy) (48). Thus, although our sample size may appear small to some readers, it is important to note that we obtained the necessary power and representativeness to generalize from our results while purposefully avoiding an unnecessarily large sample that could have biased our results toward a false-positive type I error (48).

First, the statement is odd — it refers to “necessary power … to generalize” but power analysisis not about generalizing, its about the ability to detect effects and relationships. For a given effect size, research design, statistical analysis, and statistical significance threshold (p-level, typically .05), power (the ability to detect a true effect) increases as sample size increases.

Second, how do they know they had the “necessary power” if they did not conduct a power analysis? If they did conduct a power analysis why is it not reported in the paper? The supplement does provide this cryptic statement:

A power analysis indicated that this sample size exceeded the recommended n = 90 required to detect moderate effect sizes.

Very strange stuff. Reports of power analyses typically include lots more detail, something like, “A power analysis showed that, with a sample size of 254, a t-test would have 80% power to detect an effect size of d=.4 (the average effect size in social psychology).” Nothing like this appears in M-R. To be fair, statistical power was not well-understood by many social psychologists until the last 10 years or so. Of course, they could have written nothing rather than something both cryptic and wrong. Or maybe they were pressured into reporting a power analysis by a reviewer or editor, did not really understand power, and did the best they could (which was not very good). So was this rigor posturing, an innocent omission, or statistical incompetence? Who knows, but…

It gets worse, much worse.

“Purposely Avoiding an Unnecessarily Large Sample”

Best methodological practices are to use large samples, not small ones. They seemed to fundamentally misunderstand this when they wrote:

our sample size may appear small to some readers, it is important to note that we obtained the necessary power and representativeness to generalize from our results while purposefully avoiding an unnecessarily large sample that could have biased our results toward a false-positive type I error (48).

The idea that large samples are biased towards false positives5 is beyond wrong. The implication that “avoiding an unnecessarily large sample” somehow protects against false positives is beyond wrong. These claims are an inversion of how sample size relates to false positives. Their claim that “avoiding an unnecessarily large sample size” is somehow a “recommended practice” is deeply confused.

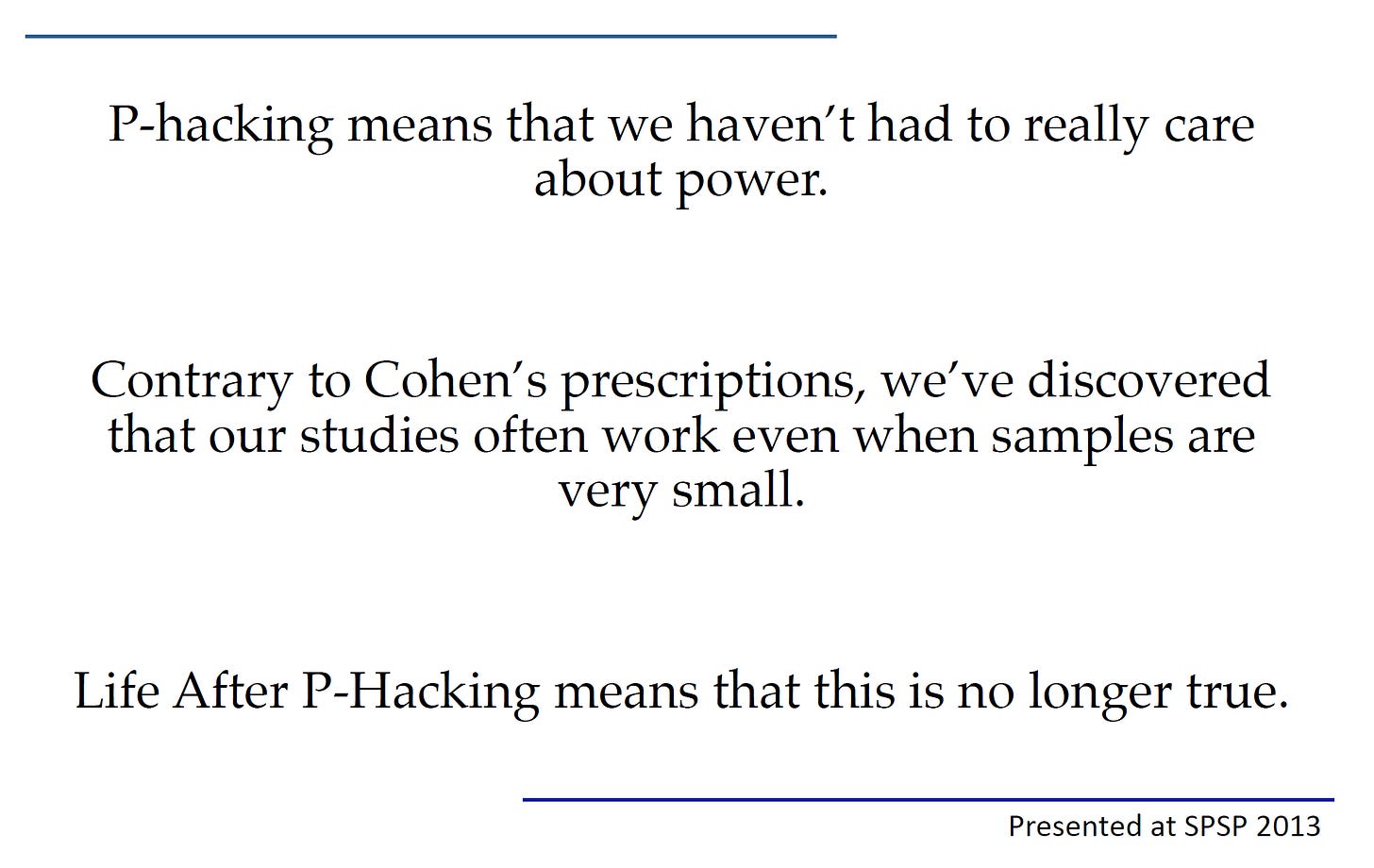

Small samples, especially when combined with suboptimal methods are notorious for producing false positives. The single best methodological protection against false positives is large samples, not small ones. Ironically, citation 48 in the quote above is to this famous paper:

Contra what M-R cited the above paper as supporting:

purposefully avoiding an unnecessarily large sample that could have biased our results toward a false-positive type I error (48)

there is nothing in the paper arguing that smaller sample sizes reduce false positives. It was apparent to anyone with a minimal understanding of this paper that the implication was for larger samples, not small ones. The same team made this point explicitly a couple of years later, at the conference of The Society for Personality and Social Psychology. Here is one of their slides:

Conclusions

M-R had one great strength: The participants were faculty evaluating applicants rather than people irrelevant to faculty hiring (undergraduates, samples of nonacademic adults, etc.). But otherwise, it had far more rigor posturing than actual rigor. It wasn’t any worse than other social psychology typical of that era, but, except for the sample, it was typical social psychology. Do with that what you will.

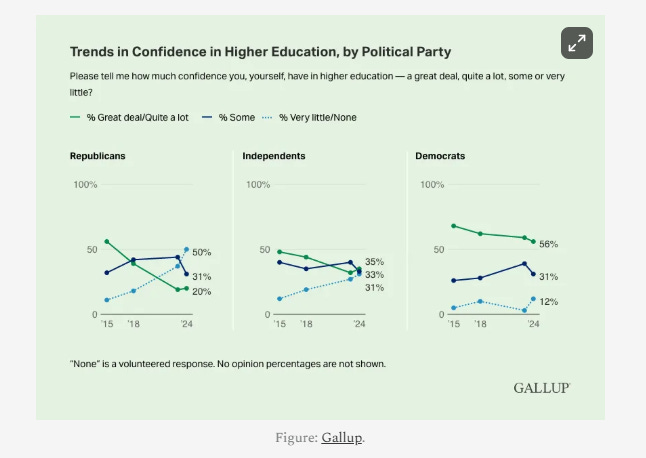

The extraordinary fanfare the M-R study received, when juxtaposed against its many weaknesses (including, in addition to those listed here, a complete absence of discussion of the study’s weaknesses and limitations), was symptomatic of many of the dysfunctions of academia, especially in the social sciences. Small studies that others fail to replicate. Obsession with “oppression.” Leaping to melodramatic conclusions. Calling those who express skepticism about the research sexists (yes, this occurred in several different ways, more on this in a subsequent post). Rigor posturing more than rigor. This is emblematic of why the credibility of science, academia, and experts has been declining.

Commenting

Before commenting, please review my commenting guidelines. They will prevent your comments from being deleted. Here are the core ideas:

Don’t attack or insult the author or other commenters.

Stay relevant to the post.

Keep it short.

Do not dominate a comment thread.

Do not mindread, its a loser’s game.

Don’t tell me how to run Unsafe Science or what to post. (Guest essays are welcome and inquiries about doing one should be submitted by email).

Related Posts

Footnotes

They did test their main model in two different ways, but did not test models making different assumptions about causal relationships.

M-R never used the term “oppression.” However, they did write this:

the current results suggest that subtle gender bias is important to address because it could translate into large real-world disadvantages in the judgment and treatment of female science students.

Averaging standardized scores. For my nonexpert readers, without getting all statsy and mathsy, standardizing responses to individual questions in a survey is a way to put responses measured in different units (say, height and weight or self-reported Presidential vote and party identification) into the same unit, so they can be combined.

Representative sampling provides excellent grounds for generalizing to the target population. That is true, but restricted to sampling. If the study sucks in other ways — bad measures, suboptimal statistics, etc. — it can still suck and not really generalize to anyone. Even representative sampling can be suboptimal if the sample is small, as in the M-R study. Its not “bad,” exactly, but small samples mean there is a wide uncertainty interval around any obtained results.

False positives, for the statistically uninitiated. A false positive, in this context, occurs when research finds something “statistically significant” (seeming to indicate there is a real effect or relationship) even when there is no effect or relationship (which can subsequently be revealed when the work cannot be replicated by independent teams). Its called a “false positive” because the apparent finding of a “postive” (real) effect is, in fact, “false” (the effect is not real).

Wow. Although I think power and power analysis was well understood in social b-4

10 years ago.